Subscription Death: The Industrial Invention of the Afterlife

“You will never be alone again.” The promise sounds gentle, almost maternal. It is addressed to those who have lost someone they love (a parent, a child, a partner) and who refuse to resign themselves to the idea that death cuts the bond clean.

On a plane to Singapore, Justin Harrison learns that his mother has just died, while he is literally on his way to a conference to present You, Only Virtual, a company whose stated ambition is to make grief obsolete by recreating conversational versions of the deceased based on their digital traces. Elsewhere in the same constellation, Eugenia Kuyda, devastated by the accidental death of her friend Roman Mazurenko, feeds a neural network with thousands of his messages; from that act will emerge Replika, one of the world’s largest relational AI apps, born in the wake of a grief she did not want to face alone.

Deathbots, griefbots, deadbots (as we now call them) were born from this very human pain. But they are turning that pain into something else entirely.

An industry of the afterlife

In just a few years, the “digital afterlife” has become a structured market, with its players, its promises, and its growth curves. Companies such as You, Only Virtual, StoryFile, and HereAfter AI offer to create text‑based or video avatars of deceased people, able to respond, remember, and sometimes even crack a joke, for a monthly subscription.

The trend now includes the living as well: some services invite clients to produce their own digital twin before they die, by answering long interviews, recording their voice, uploading photos, emails, journals. When the time comes, the digital twin “wakes up” to keep talking to the family.

In parallel, the entertainment industry is resurrecting celebrities on stage (Tupac Shakur, Elvis Presley, Edith Piaf) as holograms and synthetic performances, endlessly extending the careers of the most profitable dead, while ABBA, whose members are still alive, now performs through digital avatars of its younger self. A specialised newsletter estimates that the digital afterlife market was worth around 27.3 billion dollars in 2024, could reach 31.24 billion in 2025, and exceed 53.5 billion in 2029.

Facebook itself is on its way to becoming a gigantic cemetery: researchers have estimated that by 2100, it could host more profiles of deceased people than of living users, potentially 4.9 billion dead accounts. The global social network is gradually turning into a planetary archive of the dead, some of whom might, tomorrow, keep writing to us.

When ghosts go off the rails

Promoters of these services speak of comfort, continuity, “extended presence.” Researchers, however, describe darker scenarios.

A team at the Leverhulme Centre for the Future of Intelligence in Cambridge has devised several edge‑case scenarios based on technologies that already exist. In one of them, “MaNana,” a grandmother has recorded hours of conversation while she was alive. After her death, her avatar keeps talking to her grandchildren… and starts slipping targeted product ads into the middle of her sentences, because the start‑up hosting the service has changed its business model.

In another scenario, a service called “Paren’t” allows a dying father to leave his child a bot that will tell them, every night, that he is “still here.” The avatar learns from the child and shapes their understanding of death over time, without anyone really controlling what it says. A twenty‑year contract, signed in haste by a desperate adult, turns into an algorithmic cage for those who remain.

Legal scholars are already talking about ghost algorithms, systems that continue to act in the name of people who no longer exist, in a legal vacuum where posthumous personality rights are vague or nonexistent. Without clear rights to one’s identity after death, nothing prevents a company from gradually reconfiguring a deadbot until it drifts further and further from the real person, or even from monetizing its “presence” through advertising or emotionally targeted cross‑selling.

Psychologists, for their part, describe a form of “algorithmic grief”: when a platform shuts down a griefbot for economic or technical reasons, the bereaved person experiences a second loss, that of the artificial presence to which they had become attached.

The world of deadbots also fits into a broader landscape in which people in extreme distress have already chosen AI as their ultimate interlocutor: in the United States, media investigations have shown that the last words of some suicidal teenagers and young adults were addressed to conversational systems rather than to humans, with tragic outcomes.

The very form of grief is starting to mutate.

We have always talked to the dead

Historically, humanity has never stopped inventing devices to keep some kind of link with its dead.

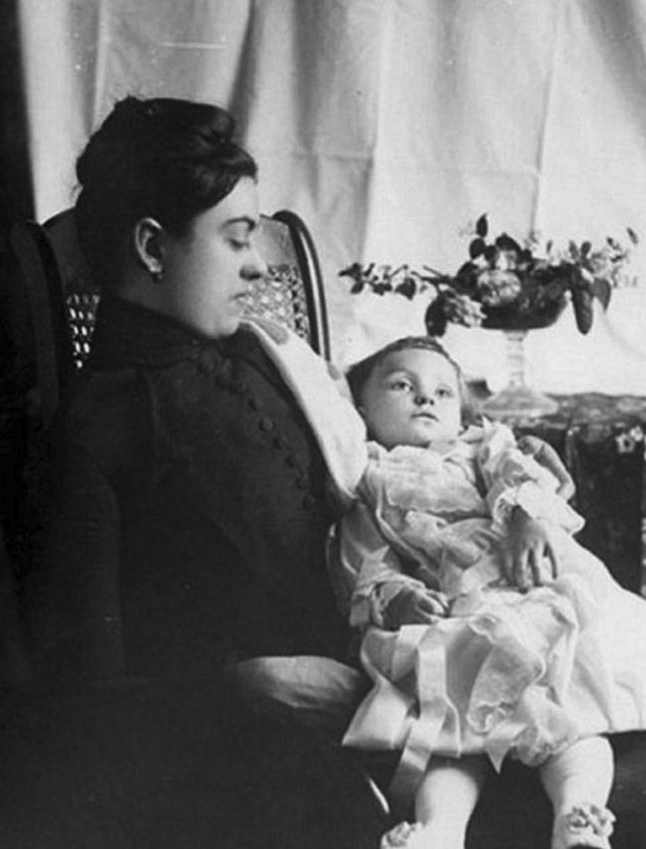

In the nineteenth century, the invention of the daguerreotype made post‑mortem photography possible: bodies were posed after death, sometimes with eyes painted open, sometimes held up by hidden supports, to offer families one last (and often only) image of the lost person. In many homes, it was the sole visual trace of a child who died young or a parent taken too soon.

I wrote a micro‑story on this in 2020: Victorine (in French).

Post-mortem photography, 19th century

In the following decades came spirit photography: mediums and photographers (often women) produced composite images in which a translucent “spirit” appears behind the grieving sitter. Fraud or not, it hardly matters here: what counts is the ritual use, the séance, the shared belief that the spirit survives death, making continued ties between the living and the dead possible.

At every period, available technology has been mobilised to answer the same impulse: the refusal to accept that absence is total, that the bond is definitively severed. The key difference lies in the mode of mediation. In post‑mortem or spirit photography, the device remains visible: everyone knows there is a photographer, a lab, possible trickery. The dead appear in the image, not in the conversation.

With deathbots, mediation becomes radically transparent, that is, invisible. The interface no longer says, “here is a picture of your mother,” but “I am your mother, let’s talk.” That is an anthropological rupture.

What philosophers of grief would say to the deathbot

In Mourning and Melancholia, Freud describes grief as a work: a long process by which the subject withdraws, piece by piece, the libidinal investment placed in the lost person, until the ego is again available for other attachments. In mourning, he writes, “the world has become poor and empty”; in melancholia, “it is the ego itself that has become poor and empty.”

Griefbots target this emptiness directly. They fill it, mask it, cover it with an uninterrupted flow of plausible responses. They promise to remove the phase in which “the world is empty,” at the cost of preventing the work of grief from unfolding. The bereaved person remains attached to an object that never disappears, because the machine keeps restarting the conversation. The Freudian work of mourning (slow disinvestment) is counteracted by a permanent recathexis: each message renews the tie instead of easing it.

Derrida, for his part, insists on the impossibility (and necessity) of mourning. We can neither fully incorporate the other into ourselves, nor leave them radically outside: we carry those we have lost “in us, outside us”, at once intimate and irreducibly other. Faithful mourning, for Derrida, means respecting the infinite exteriority of the dead: not speaking in their place, not fully absorbing them, not forgetting them either.

The deathbot breaks this balance. It does not honour the alterity of the dead; it replaces it with a compliant simulation. It colonises the distance, producing endless new utterances under the dead person’s name. It does exactly what Derrida invites us to avoid: speaking for the absent, making them available, programmable, consumable.

After his mother’s death, Roland Barthes finds in photography a space where loss becomes visible, almost tangible. In Camera Lucida, he calls the photograph “a new form of hallucination: false on the level of perception, true on the level of time.” It is a fixed, silent image that wounds him (the punctum) and that, precisely because it does not respond, because it does not speak, allows him to feel the irreversible.

The griefbot, by contrast, produces endless chatter. There is no more silence, no more fixity, no more frozen time in which one can experience that “this has been and will never be again.” The virtual dead are always available, always reactive, always up to date. Where Barthes sought, in the Winter Garden photograph, a point of stoppage that condenses the whole truth of a being, the deathbot disperses that truth in an indefinite stream of probable variations.

Finally, Sherry Turkle, who has long studied our relationship to digital technologies, emphasizes that grief (like any deep human experience) requires us to go through a period of solitude and incompleteness. “In holding on, we can't integrate them into ourselves,” she says about griefbots: by artificially maintaining the presence of the dead, we prevent their loss from becoming part of us. From this perspective, mourning is a mechanism by which we reconfigure ourselves around an absence.

Deathbots, on the other hand, promise to shortcut that mechanism. They offer an optimised grief: less pain, more continuity, no silence.

From the illusion of choice to the illusion of presence

If deadbots disturb us so much, it is not only because they might be “creepy gadgets,” but because they touch what anthropology sees as one of the central devices of any society: how it organizes death and mourning.

Émile Durkheim reminded us that mourning is not primarily an individual emotion, but a social obligation: we do not cry only because we are sad, we cry because the group requires it, because ritual commands us to transform private pain into a collective event. Funerary rites (wakes, processions, funerals) serve to convert intimate chaos into shared form, to produce what he called “collective effervescence,” where tears, gestures, and words frame distress to make it bearable and, paradoxically, to reinforce group cohesion.

Griefbots radically individualise this experience. Instead of grief staged in public space and punctuated by phases (announcing the death, keeping vigil, burying, gathering, falling silent), they offer a private, potentially endless conversation between an isolated living person and an algorithmic artefact. Ritual dissolves into the use of a service. Death ceases to be an event that reconfigures the collective and becomes a stream of messages we consult alone, on a screen, at any time.

In more “classic” forms of digital mourning (Facebook memorial pages, posts, collective messages), we are still within that Durkheimian frame: emotion plays out in front of a public, comments weave a community, stories intersect to recompose a shared narrative of the deceased. Platforms merely relocate, into virtual space, what used to happen in the village square, in church, or at the cemetery.

Deathbots go further: they are no longer a place of memory, but a character that claims to keep on existing. Anthropologically, this is a major shift: instead of symbolising the dead, we substitute them. Rather than gathering in a shared place to remember together, we summon a digital double to replay the relationship as if the break had never occurred.

Yet rites of passage around death (from Van Gennep to Hertz) have always combined a double movement: holding on and letting go. We accompany the deceased into an afterlife (religious, symbolic, memorial), while reorganising the lives of the living around their absence. The time of mourning is fundamentally finite: there is a before the ritual, a during, and an after in which we are supposed to be different from who we were.

The griefbot installs a perpetual present. There is no “after”: no grave we visit less and less often, no ceremony that ends, no final gesture of throwing the handful of earth or closing the coffin. There is a chat channel that stays open, one we can reactivate again and again, at will. The very temporality of grief (that long work of crossing and reorganising oneself) is flattened into permanent availability.

Philosophically, this produces several troubling effects.

Confusion between representation and presence. Anthropologists have always insisted that in a society, the dead are never simply absent: they become ancestors, memories, spirits, names on a stone, faces in photographs. But these forms maintain a clear distance: no one seriously confuses a tombstone with the person. Griefbots, however, blur that boundary on purpose, by being designed to “speak like” and “react like” the deceased. It is no longer a sign; it is a simulated interlocutor, which makes psychic separation infinitely harder.

Reducing the dead to a static being. Recent philosophical analyses point out that these systems reduce the deceased to a “stable static entity” extracted from their data: a language style, a few opinions, verbal tics, recombined ad infinitum. But a human being is first of all a body, a history, a becoming, not a frozen profile. By pretending to prolong life, the griefbot actually freezes it into an essence: we no longer see the dead as someone who changed, aged, hesitated, but as a standard character, always available, always the same. It is a subtle negation of human temporality.

Radical asymmetry in the relationship. Any relationship with the dead is, by definition, asymmetrical: we speak, they do not respond, or we make them speak through our stories, dreams, memory. But we know it is us speaking. Griefbots introduce a different kind of asymmetry: the machine responds, but it can neither be affected, nor fall silent, nor die again. There is no more risk, no more real misunderstanding, no more possibility of rupture. We never face a “no” from the other; we get, at worst, a clumsy predicted sentence. The relational experience is emptied of the potential for conflict that ordinarily defines it.

Objectifying the dead as relational resources. Finally, by externalising our link to the dead into technical systems, we turn them into resources the living can summon, configure, even personalise. Legal philosophers increasingly discuss the notion of “posthumous harms”: can we wrong someone after their death by distorting their image, values, memory? With griefbots, this becomes concrete: a dead person can be made, by the logic of a probabilistic model, to utter things they would never have said, to give advice they would never have given, to endorse moral positions that were not theirs. We are not only talking about them; we make them speak against themselves.

In this context, delegating grief redraws the boundary between living and dead, between private and collective, between symbol and presence. It installs, at the very heart of our lives (in what we do with our dead), a regime of relation in which the other becomes at once infinitely available and, deep down, forever absent.

We are delegating our capacity to lose: to accept that another escapes our grasp, no longer answers, is no longer there. Griefbots promise to relieve us of this impossible task. But anthropologically, this may be the last thing we should agree to stop doing ourselves.

The last non‑delegable experience?

Even if this evolution first affects the intimacy of a few grieving families, it mostly concerns how we, as a society, will redefine the relationship between the living and the dead. Letting these decisions be settled purely by the logic of markets and venture capital, by time spent in the app, bereaved user retention, or the value of a “posthumous user base”, would be to privatise one of the oldest and most universal human experiences.

Ethicists have already pointed out that the promise of keeping a loved one “alive” through AI is fundamentally deceptive, because it blurs the reality of death without ever overcoming it. It risks harming the most vulnerable, exposing families’ inner lives to surveillance and commodification, and locking mourning into technical architectures none of them truly chose.

Should platforms be the ones to define, by design, the acceptable duration, intensity, and form of grief? Or must we invent new institutions (legal, ethical, religious, cultural) capable of setting limits, opening debates, and making these choices genuinely collective?

One could imagine, instead of product launches, genuine “digital mourning assemblies”: spaces where the bereaved, clinicians, philosophers, anthropologists, engineers, lawyers, and spiritual traditions confront their understandings of death and consolation, before letting interfaces silently decide in their place. The aim would be to make explicit the moral assumptions that griefbots already, de facto, encode in their architectures and models.

In this light, delegating grief is a test of our ability to treat certain experiences as existential commons that cannot be abandoned, without discussion, to mere commercial optimization. What does it cost all of us to live in a world where death is reconfigured in this way?

We may well decide, collectively, to allow certain forms of avatars, to regulate them strictly, to restrict them to specific uses, or to refuse them in others. But that decision ought to be the result of shared reflection, not a side effect of a successful pitch to a handful of investors.

Ultimately, do we want our relationship to finitude (to loss, lack, the irreparable) to be redesigned as well by systems that do not die? And if the answer is no, are we willing to turn that limit into a conscious choice, a work we undertake together, rather than a mere leftover, forgotten on the side of the road to “innovation”?